Sitebulb sells a $149-a-month desktop crawler. Its most-shared screenshot is the link graph: a force-directed SVG showing how PageRank flows through a site. It's a pretty picture, and it makes it obvious which pages are starving for link equity.

Turns out that picture is ~100 lines of browser JavaScript once you have a sitemap and the fetch-page proxy already built.

Meet the Internal Link Equity Flow tool. Paste a site root URL. Get back:

- An SVG graph with nodes colored by status (green hub, amber middle, red orphan-like)

- Top 10 hubs by PageRank

- 15 orphan-like URLs with 1 or zero internal inbound links

- A fix prompt tailored to your orphans + hubs

No install. No sign-up. No monthly bill.

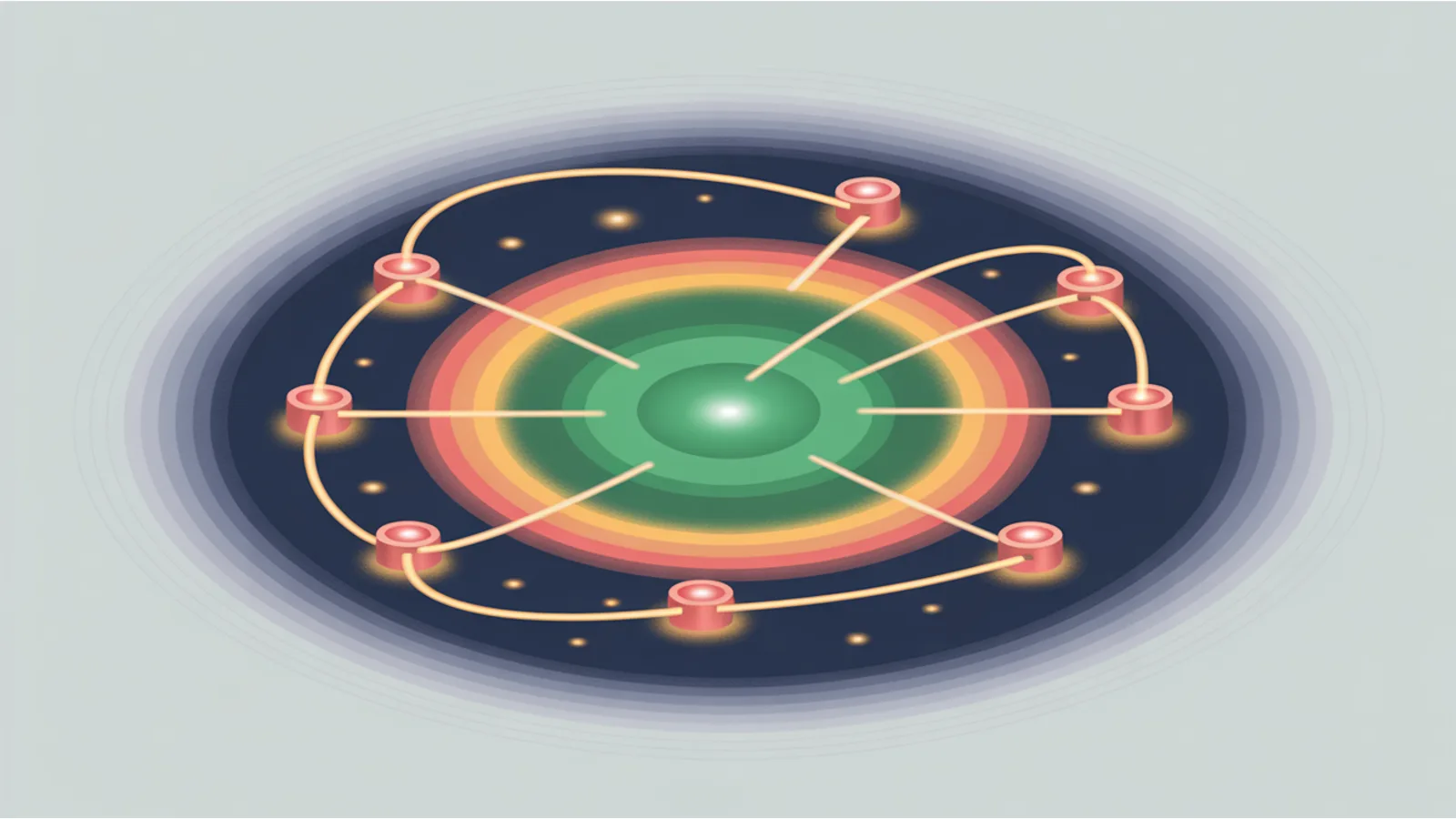

What the graph actually shows

Every node is a URL from your sitemap. Every edge is an internal link from one page to another. Node size is proportional to sqrt(PageRank), so the biggest circles are your top-PR pages and the tiny circles are the forgotten corners.

The layout is ringed, not fully force-directed:

- Inner ring (top 3 nodes by PR) — your most authoritative pages. Usually homepage, main services, primary hub content.

- Middle ring (nodes 4-12) — secondary hubs, category pages, pillar posts.

- Outer ring (everything else) — leaf content, individual posts, deep product pages.
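A minimal sketch of the ringed layout and the sqrt sizing, assuming PageRank scores arrive as a `Map` from URL to score. The canvas center, ring radii, minimum/maximum circle radius, and even angular spacing are illustrative choices, not the tool's actual values.

```javascript
// Sketch: three rings by PageRank rank, circle radius proportional to sqrt(PR).
// cx/cy, radii, minR and maxR are hypothetical illustration values.
function ringLayout(scores, { cx = 400, cy = 400, radii = [80, 200, 340], minR = 3, maxR = 24 } = {}) {
  // Sort [url, pr] pairs by descending PageRank.
  const ranked = [...scores.entries()].sort((a, b) => b[1] - a[1]);
  // Inner ring: top 3. Middle ring: nodes 4-12. Outer ring: everything else.
  const rings = [ranked.slice(0, 3), ranked.slice(3, 12), ranked.slice(12)];
  const maxSqrt = Math.sqrt(ranked.length ? ranked[0][1] : 1);
  const nodes = [];
  rings.forEach((ring, ringIndex) => {
    ring.forEach(([url, pr], i) => {
      // Spread the nodes of each ring evenly around the circle.
      const angle = (2 * Math.PI * i) / ring.length;
      nodes.push({
        url,
        ring: ringIndex,
        x: cx + radii[ringIndex] * Math.cos(angle),
        y: cy + radii[ringIndex] * Math.sin(angle),
        // Top node gets maxR; others scale by sqrt(PR), clamped to minR so they stay hoverable.
        r: Math.max(minR, (maxR * Math.sqrt(pr)) / maxSqrt),
      });
    });
  });
  return nodes;
}
```

Scaling the radius by sqrt(PR) makes circle area proportional to PageRank (apart from the minimum-size clamp), which keeps the homepage from visually swallowing everything else.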

Colors:

- Green = hub (top 10% by PageRank). Good source of link equity.

- Amber = middle. Healthy but not a hub.

- Red = orphan-like. One or zero internal inbound links. These pages usually receive little PageRank beyond the baseline share every page gets. They exist but tend not to rank, and sometimes they don't even get crawled.
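The color rules can be sketched like this, assuming a `Map` of PageRank scores and a `Map` of inbound counts (distinct internal pages linking in). Two choices are our own assumptions, not confirmed tool behavior: the hub check runs before the orphan check when a page qualifies for both, and the hub cutoff is the score of the page at the 10% rank, so ties at the cutoff can add extra hubs.

```javascript
// Sketch of the green / amber / red rules.
// scores: Map<url, PageRank>; inbound: Map<url, distinct internal inbound links>.
function classifyNodes(scores, inbound) {
  const sorted = [...scores.values()].sort((a, b) => b - a);
  // Top 10% by PageRank, with at least one hub for very small samples (assumption).
  const hubCount = Math.max(1, Math.ceil(sorted.length / 10));
  const hubThreshold = sorted[hubCount - 1];
  const colors = new Map();
  for (const [url, pr] of scores) {
    if (pr >= hubThreshold) {
      colors.set(url, 'green'); // hub
    } else if ((inbound.get(url) || 0) <= 1) {
      colors.set(url, 'red'); // orphan-like: zero or one inbound link
    } else {
      colors.set(url, 'amber'); // middle
    }
  }
  return colors;
}
```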

Hover any circle to see the exact URL, PageRank score, and inbound link count.

How the PageRank calculation works

The algorithm is the classic Brin-Page PageRank (1998), with damping factor 0.85 and 30 iterations. In pseudocode:

```
Initialize: PR(p) = 1/N for every page p

Repeat 30 times:
    for each page p:
        newPR(p) = (1 - d) / N + d * sum(PR(q) / out_degree(q) for every q linking to p)
    PR = newPR    # update all pages at once, using only the previous iteration's values
```

The damping factor d = 0.85 is the probability that a user keeps clicking links rather than jumping to a random page. 30 iterations is more than enough to converge for a site with 20-50 URLs.

Pages with zero outbound internal links are "dangling nodes." I handle them explicitly: on every iteration their PageRank is redistributed equally across all nodes, so they don't act as sinks that soak up equity without passing any on.
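Here is a minimal runnable sketch of the loop above, assuming the crawl result is a plain object mapping each URL to an array of internal target URLs (a hypothetical shape, not necessarily the tool's). It uses the synchronous update and treats each dangling node as linking to every page. Ignoring self-links and duplicate links is our assumption. It also returns the L1 change from the final iteration so you can check convergence yourself.

```javascript
// PageRank by power iteration with damping d and dangling-node redistribution.
// links: { [url]: string[] } of internal targets (hypothetical input shape).
function pageRank(urls, links, d = 0.85, iterations = 30) {
  const n = urls.length;
  const index = new Map(urls.map((u, i) => [u, i]));
  // Deduplicated out-edges to known pages; self-links dropped (assumption).
  const out = urls.map((u) => {
    const targets = new Set((links[u] || []).filter((t) => index.has(t) && t !== u));
    return [...targets].map((t) => index.get(t));
  });
  let pr = new Array(n).fill(1 / n);
  let lastDelta = 0;
  for (let it = 0; it < iterations; it++) {
    // Random-jump term (1 - d) / N for every page.
    const next = new Array(n).fill((1 - d) / n);
    let danglingMass = 0;
    for (let q = 0; q < n; q++) {
      if (out[q].length === 0) {
        danglingMass += pr[q];
      } else {
        // Each outbound link carries d * PR(q) / out_degree(q).
        const share = (d * pr[q]) / out[q].length;
        for (const p of out[q]) next[p] += share;
      }
    }
    // Dangling nodes: spread their damped rank evenly so total rank stays 1.
    for (let p = 0; p < n; p++) next[p] += (d * danglingMass) / n;
    lastDelta = next.reduce((sum, v, i) => sum + Math.abs(v - pr[i]), 0);
    pr = next;
  }
  return { scores: new Map(urls.map((u, i) => [u, pr[i]])), lastDelta };
}
```

For example, two pages that only link to each other each keep exactly 0.5, and a page linked from three leaves ends up with the highest score even though it links nowhere.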

What the tool catches that single-URL audits miss

Single-URL audits tell you if one page is good. They don't tell you:

- Which pages are ranking below their potential because they get 0-1 internal links

- Which pages are hogging link equity when they should be redistributing to inner content

- Where the site architecture has become "deep" — 3+ clicks from the homepage with no hub links pointing in

- Whether your category pages are actually hubs or just list pages with no outbound internal authority
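Click depth, the "3+ clicks from the homepage" idea above, is a breadth-first search over the crawled edges. This is an illustrative sketch, not a description of how the tool measures depth, and the `links` object shape is assumed. Because the tool samples the sitemap, edges that run through unsampled pages are missing, so depths computed from a sample are upper bounds.

```javascript
// Breadth-first search from the homepage; unreachable URLs keep depth Infinity.
// links: { [url]: string[] } of internal targets (hypothetical input shape).
function clickDepths(home, urls, links) {
  const depth = new Map(urls.map((u) => [u, Infinity]));
  depth.set(home, 0);
  const queue = [home];
  while (queue.length > 0) {
    const page = queue.shift();
    for (const target of links[page] || []) {
      // Only visit known URLs, and only the first (shortest) time we reach them.
      if (depth.has(target) && depth.get(target) === Infinity) {
        depth.set(target, depth.get(page) + 1);
        queue.push(target);
      }
    }
  }
  return depth;
}

// Pages at minDepth or deeper, including unreachable ones (depth Infinity).
function deepPages(depth, minDepth = 3) {
  return [...depth].filter(([, d]) => d >= minDepth).map(([url]) => url);
}
```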

A real example: I ran this against my own site. The homepage and main tools index were hubs (as expected). But /tools/eeat-analyzer/ was middle-tier despite being one of the most valuable pages on the site, because nothing in the blog was linking INTO it. The fix was adding 5 internal links from pillar posts. Re-crawl, re-run, and it moved from amber to green.

You can only see that pattern in a graph view.

How to use it

- Go to /tools/internal-link-equity-flow/

- Paste your site root URL, e.g. `https://example.com/` (not a deep URL).

- Set "Max URLs to crawl" to 25 for a typical blog, 40 for a larger site. Higher = more accurate but slower.

- Click Run. The tool:

  - Fetches `/sitemap.xml` (or `/sitemap_index.xml`, etc.)

  - Samples N URLs spread across the first / last / middle of the sitemap (template diversity)

  - Crawls each in parallel batches of 3

  - Extracts every internal `<a href>` with a hostname matching the site

  - Builds the adjacency matrix and computes PageRank

  - Renders the SVG + hub/orphan tables + fix prompt
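The sampling and link-extraction steps, sketched under assumptions: evenly spaced indices are our approximation of "first / last / middle" sampling, and `internalTargets` takes href strings you have already pulled out of the HTML (in a browser, for example, via `DOMParser` and `querySelectorAll('a[href]')`) so it also runs outside a browser. Collecting targets in a `Set` is what makes two links to the same href count as one edge.

```javascript
// Pick n URLs at evenly spaced positions so the first, last, and middle
// of the sitemap are all represented (approximation of the tool's sampling).
function sampleSpread(urls, n) {
  if (urls.length <= n) return [...urls];
  if (n === 1) return [urls[0]];
  const picked = [];
  for (let i = 0; i < n; i++) {
    picked.push(urls[Math.round((i * (urls.length - 1)) / (n - 1))]);
  }
  return picked;
}

// Resolve hrefs against the page URL and keep same-hostname http(s) links.
// Fragments are dropped so /page and /page#section become one node.
function internalTargets(pageUrl, hrefs) {
  const host = new URL(pageUrl).hostname;
  const targets = new Set();
  for (const href of hrefs) {
    let url;
    try {
      url = new URL(href, pageUrl);
    } catch {
      continue; // skip malformed hrefs
    }
    if (!/^https?:$/.test(url.protocol) || url.hostname !== host) continue;
    url.hash = '';
    targets.add(url.href);
  }
  return [...targets];
}
```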

Crawl time: ~30-60 seconds for 25 URLs, ~1-2 minutes for 40 URLs.
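A sketch of the batching step, assuming a hypothetical `fetchPage(url)` that returns a promise of the page HTML (standing in for the fetch-page proxy). Each batch waits for its slowest page before the next one starts, so 25 URLs means 9 sequential rounds and 40 URLs means 14, which is why crawl time grows roughly linearly with the URL cap. Using `Promise.allSettled` so one failed page doesn't abort its batch is our choice.

```javascript
// Crawl URLs in parallel batches (default 3). fetchPage is a hypothetical
// async (url) => html function; failed pages are recorded as null.
async function crawlInBatches(urls, fetchPage, batchSize = 3) {
  const results = new Map();
  for (let i = 0; i < urls.length; i += batchSize) {
    const batch = urls.slice(i, i + batchSize);
    // Wait for every page in this batch (success or failure) before moving on.
    const settled = await Promise.allSettled(batch.map((u) => fetchPage(u)));
    settled.forEach((s, j) => {
      results.set(batch[j], s.status === 'fulfilled' ? s.value : null);
    });
  }
  return results;
}
```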

Fixing orphans — the actual playbook

The fix prompt the tool emits is specific to your orphans. But the template is:

- Identify 2-3 related hub pages per orphan. "Related" means the orphan is genuinely useful context for a reader of the hub.

- Add the internal link — in body text, not just footer. Anchor text should be descriptive (not "click here").

- Re-run the tool after the deploy. Once an orphan has at least 2 internal inbound links, it should move from red to amber (middle), which might take 2-3 new hub links. Google itself only sees the new links after it recrawls the linking pages.

- Don't over-link from hubs. A hub with 500 outbound internal links passes almost nothing to each linked page. Keep hubs under ~50 outbound internal links for link-equity efficiency.

- Track monthly. Run the tool the same week every month. Orphan count should trend down, middle ring should grow, hubs should stay stable.
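To see why the ~50-link guideline matters, here is the per-link share each outbound link carries under the PageRank formula in the calculation section: d × PR(q) / out_degree(q). With an illustrative hub PageRank of 0.2, 500 links give each target about 0.00034, while 50 links give about 0.0034. The `{ url, pr, outDegree }` record shape is hypothetical.

```javascript
// Equity one outbound link carries: d * PR(q) / out_degree(q).
function equityPerLink(pr, outDegree, d = 0.85) {
  return outDegree > 0 ? (d * pr) / outDegree : 0;
}

// Flag hubs above the outbound-link limit and report their per-link share.
// hubs: Array<{ url, pr, outDegree }> (hypothetical shape).
function overlinkedHubs(hubs, limit = 50) {
  return hubs
    .filter((h) => h.outDegree > limit)
    .map((h) => ({ ...h, perLink: equityPerLink(h.pr, h.outDegree) }));
}
```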

The limits — what this doesn't do

- Doesn't crawl beyond the sitemap. If your sitemap is missing URLs, they won't appear. Fix your sitemap first; the sitemap audit helps.

- Doesn't count external backlinks. PageRank computed here is purely internal. A page with zero internal links but 500 external backlinks will show as an orphan, which is misleading. Mental-model correction: Google's real PageRank includes external links; this tool's PageRank is only a proxy for how link equity is distributed internally.

- Doesn't fetch the rendered DOM. If your internal links are injected by JavaScript after hydration, this tool won't see them. Server-render internal links (it's better for every reason anyway).

- Doesn't track anchor text. Two links with the same href but different anchor text count as one edge. For anchor-text analysis, use anchor-text-entropy-scanner.

- Not a competitor-backlink tool. For that, pay Ahrefs or use citation-url-extractor + SERP-based discovery.

Pair it with the rest of the stack

- Code-Diff Patch Generator — released the same day. Structural fixes when you know what to patch.

- Sitewide Crawl Sampler — gives per-URL audit scores alongside the graph view.

- Link Graph — the original, simpler link-viz tool, kept for comparison.

- Orphan Page Detector — narrower tool focused just on orphan identification.

- Mega SEO Analyzer v2 — the orchestrator that now deep-links to this tool for site-wide analysis.

Related reading

- Code-Diff Patch Generator — the other transformative release this week

- Mega SEO Analyzer v2 — paid-tool parity — the umbrella argument

- Every new performance audit tool

- Lighthouse taught me five new tools — the audit that drove several batch-10 tools

Fact-check notes and sources

- Original PageRank algorithm: Brin, S. and Page, L. (1998). "The Anatomy of a Large-Scale Hypertextual Web Search Engine." Stanford paper PDF.

- Damping factor 0.85 convention: standard in PageRank literature; represents ~85% probability of following a link vs random jump.

- 30-iteration convergence: PageRank typically converges to 3-4 decimal places within 20-30 iterations for graphs under 1000 nodes. See PageRank convergence analysis (Haveliwala, 2003).

- Sitemap XML namespace: sitemaps.org spec 0.9.

- Internal linking as a ranking signal: Google Search Central recommends it extensively — see guidance on site hierarchy.

This post is informational, not SEO-consulting or engineering advice. Mentions of Sitebulb, Ahrefs, SEMrush, Moz, Google, Stanford, and similar products and institutions are nominative fair use. No affiliation is implied. Run only on sites you own or have written authorization to crawl; respect robots.txt.