TL;DR. Generators save hours of reference-hunting and make the default pattern auditable. The output is a strong starting scaffold that covers roughly 80% of the work; review and tune the remaining 20% for your site's specifics before committing.

The SEO Roadmap Generator is the tool you reach for when you already have a list of audit findings and need a fast, copy-paste-able plan for fixing them. It reuses the same chrome as every other jwatte.com tool (deep-links from the mega analyzers, AI-prompt export, CSV/PDF/HTML download), but its job is narrow: turning findings into a prioritized roadmap.

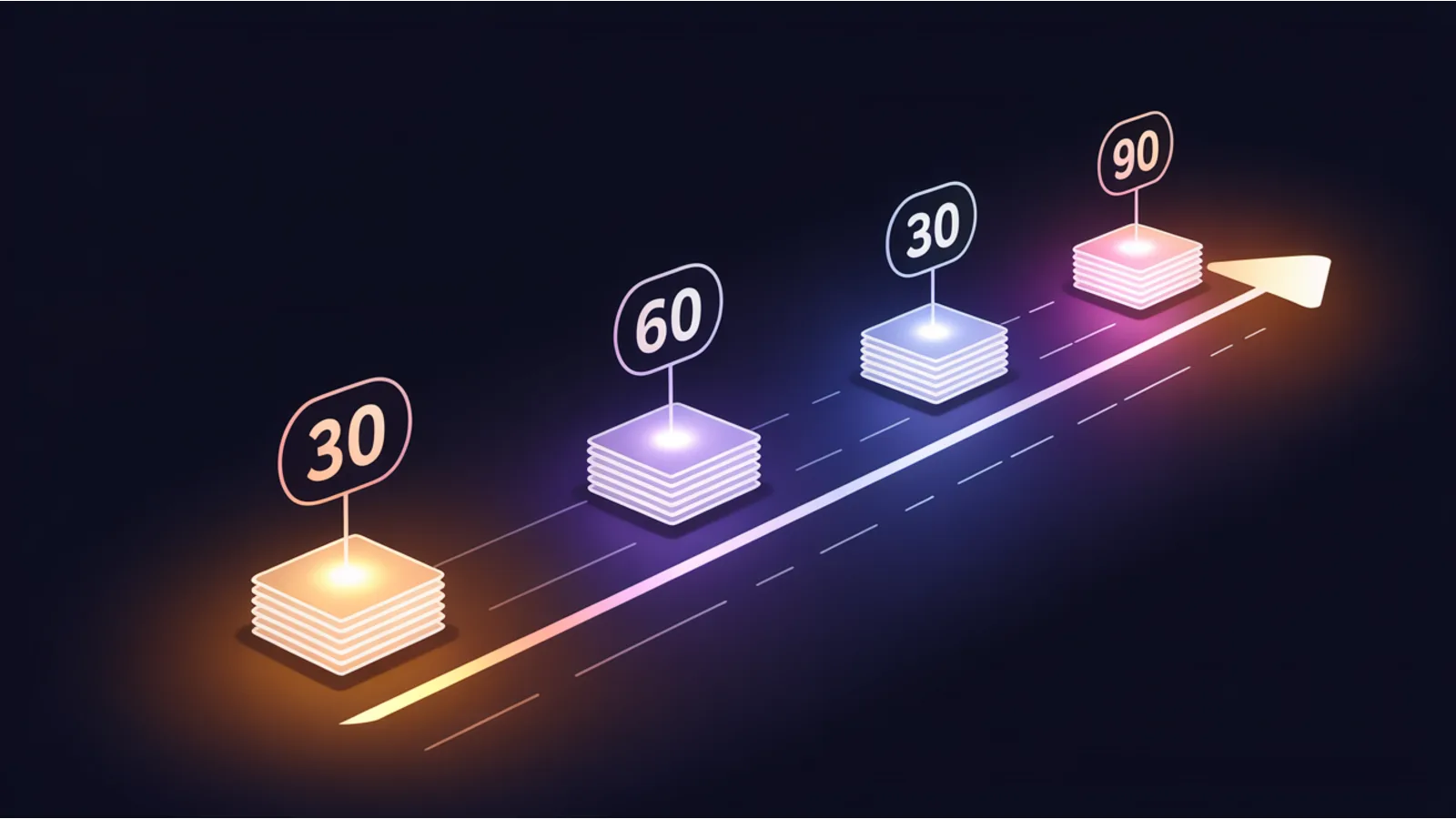

Paste a list of findings (one per line, optionally prefixed with [fail] / [warn] / [info]). The generator builds an Impact × Effort matrix, auto-sequences into a 30/60/90-day roadmap, and outputs a shareable plan for your team or client.
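The flow described above can be sketched in a few lines of Python. This is an illustration under stated assumptions, not the tool's actual implementation: the impact weights, the default effort value, the treatment of unprefixed lines as `info`, and the bucketing rule are all hypothetical, and the names `parse_findings` and `build_roadmap` are invented for the example.

```python
import re
from dataclasses import dataclass

# Assumed weights: the tool's real scoring is not documented in this post.
SEVERITY_IMPACT = {"fail": 3, "warn": 2, "info": 1}
PREFIX = re.compile(r"^\[(fail|warn|info)\]\s*", re.IGNORECASE)


@dataclass
class Finding:
    severity: str
    text: str
    impact: int  # 1 (low) to 3 (high), derived from severity
    effort: int  # 1 (low) to 3 (high), assumed medium unless overridden


def parse_findings(raw: str, default_effort: int = 2) -> list:
    """Parse one finding per line; unprefixed lines are assumed to be 'info'."""
    findings = []
    for line in raw.splitlines():
        line = line.strip()
        if not line:
            continue  # skip blank lines
        match = PREFIX.match(line)
        severity = match.group(1).lower() if match else "info"
        text = line[match.end():] if match else line
        findings.append(Finding(severity, text, SEVERITY_IMPACT[severity], default_effort))
    return findings


def build_roadmap(findings: list) -> dict:
    """Bucket findings into 30/60/90-day windows (hypothetical rule)."""
    roadmap = {30: [], 60: [], 90: []}
    # High impact first, then low effort; sorted() is stable, so ties keep input order.
    for f in sorted(findings, key=lambda f: (-f.impact, f.effort)):
        if f.impact == 3 and f.effort <= 2:
            window = 30
        elif f.impact >= 2:
            window = 60
        else:
            window = 90
        roadmap[window].append(f.text)
    return roadmap
```

Calling `build_roadmap(parse_findings(text))` on a pasted list returns a dict keyed by 30, 60, and 90. In this sketch a high-impact, high-effort finding slips to the 60-day window, which is one plausible reading of an Impact × Effort matrix; adjust the rule to match how your team actually sequences work.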

Why this dimension matters

Generators shortcut the "blank-page" problem. A drop-in template (robots.txt, sitemap, Dockerfile, Kubernetes manifest, llms.txt, JSON-LD scaffold) saves hours of reference-hunting and makes the default pattern auditable. The shipped output is never production-ready without review, but it's always a stronger starting point than an empty editor.

Common failure patterns

- Generated template copied verbatim without local tuning — every generator ships sensible defaults, but every real site has constraints the generator can't know (custom domains, staging hosts, legacy paths that must stay indexed). Review each directive; delete what doesn't apply.

- Generated config committed without a comment explaining the source — six months later nobody remembers why a particular directive is there. Annotate with a `# Generated from jwatte.com/tools/<slug>/ on YYYY-MM-DD` line.

- Treating the generator output as final rather than starter scaffold — the emitted file is an 80% default. The 20% is where your site's specifics live, and that's where review time belongs.
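The provenance comment from the second pattern is easy to automate. Below is a minimal sketch, assuming a `#`-style comment syntax (which works for robots.txt, Dockerfiles, and YAML, but not for JSON, where comments are not allowed). The function names and the `robots-generator` slug in the usage note are hypothetical.

```python
from datetime import date


def provenance_header(slug: str, generated_on: date) -> str:
    """Build the one-line source comment in the format suggested above."""
    return f"# Generated from jwatte.com/tools/{slug}/ on {generated_on.isoformat()}"


def annotate(content: str, slug: str, generated_on: date) -> str:
    """Prepend the provenance comment to generated file content."""
    return provenance_header(slug, generated_on) + "\n" + content
```

For example, `annotate(robots_txt, "robots-generator", date.today())` returns the original text with the comment as its first line, ready to commit.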

How to fix it at the source

Run the generator, take the output as a starting scaffold, then review every directive against your actual site: delete any that don't apply, tune any that have site-specific values, and commit with a comment linking back to the generator. Regenerate every 6–12 months: specs and conventions evolve (robots.txt files now routinely carry rules for AI crawlers, manifest.json has gained fields, Dockerfile best practices shift), and the generator tracks current best practice.
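If committed files carry a `# Generated from jwatte.com/tools/<slug>/ on YYYY-MM-DD` comment, the 6–12 month regeneration window can be checked mechanically. The sketch below is one way to do it, not part of the tool: `generated_on`, `is_stale`, and the 365-day default (the upper end of the window) are assumptions made for illustration.

```python
import re
from datetime import date
from typing import Optional

# Matches the provenance comment format suggested in this post.
HEADER = re.compile(
    r"^# Generated from jwatte\.com/tools/[^/\s]+/ on (\d{4}-\d{2}-\d{2})\s*$",
    re.MULTILINE,
)


def generated_on(content: str) -> Optional[date]:
    """Return the date in the provenance comment, or None if it is missing."""
    match = HEADER.search(content)
    return date.fromisoformat(match.group(1)) if match else None


def is_stale(content: str, today: date, max_age_days: int = 365) -> bool:
    """Flag files older than the window; files with no comment are also flagged."""
    stamp = generated_on(content)
    if stamp is None:
        return True  # no provenance at all: treat as needing review
    return (today - stamp).days > max_age_days
```

Running `is_stale(text, date.today())` over generated configs in a repository gives a quick list of files due for regeneration and review. Treating a missing comment as stale is a deliberate choice here, since an unannotated file is exactly the failure pattern described earlier.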

When to run the audit

- After a major site change — redesign, CMS migration, DNS change, hosting platform swap.

- Quarterly as part of routine technical hygiene; the checks are cheap to run repeatedly.

- Before an investor / client review, a PCI scan, a SOC 2 audit, or an accessibility-compliance review.

- When a downstream metric drops (rankings, conversion, AI citations) and you need to rule out this dimension as the cause.

Reading the output

Every finding is severity-classified. The playbook is the same across tools:

- Critical / red — same-week fixes. These block the primary signal and cascade into downstream dimensions.

- Warning / amber — same-month fixes. These drag the score down but usually don't block anything.

- Info / blue — context only. Often the kind of thing a PR reviewer would flag without blocking the merge.

- Pass / green — confirmation. Keep the control in place.

Every audit also emits an "AI fix prompt": paste it into ChatGPT / Claude / Gemini to get copy-paste code patches tied to your specific stack.

Related tools in this family

- Mega Analyzer — run the orchestrator first; the orchestrator is the diagnosis, the generator is the fix.

- ai.txt Generator — generates ai.txt + llms.txt scaffolds.

- robots.txt Simulator — verify the generator's robots.txt matches what each bot actually sees.

- Monoclone Gen. — multi-locale page cloning from a template.

Fact-check notes and sources

- See the specific tool's footer for the canonical spec reference (Sitemaps.org, llmstxt.org, Docker reference, etc.).

- Treat the output as a known-good starting point; review and adapt it to your site before committing.

This post is informational and not a substitute for professional consulting. Mentions of third-party platforms in the tool itself are nominative fair use. No affiliation is implied.