A site monitoring its AI citations notices a curious pattern. In Q3 2025, Claude 3.5 Sonnet cited their pricing-strategy article 8-12 times per week. In Q4, Claude 4 ships. By Q1 2026, the same article is cited 1-3 times per week — and Claude 4 Opus essentially never cites it.

The article didn't change. Claude changed.

Each new model release re-shuffles which content it considers high-quality, well-attributed, and worth retrieving. Sometimes the shift is intentional (new training data, paraphrase rewrite filters, freshness bias). Sometimes it's a side-effect of architectural changes. The result is the same: a page that was a top citation last quarter is invisible this quarter.

Most operators never notice. The drift is gradual, the model name keeps changing, and there's no easy dashboard showing the fall.

What the Model Preference Drift Detector does

You paste citation snapshots taken over time, one line per snapshot: model, date, citations. At least 3 snapshots are required. The tool then:

- Groups snapshots by model family (Claude / GPT / Gemini / etc.).

- Computes per-family drift from oldest to newest snapshot.

- Classifies each family: significant decline (a drop of 50% or more), decline (a drop of 20-50%), stable (within ±20%), rise (growth of 20-50%), or significant rise (growth of 50% or more).

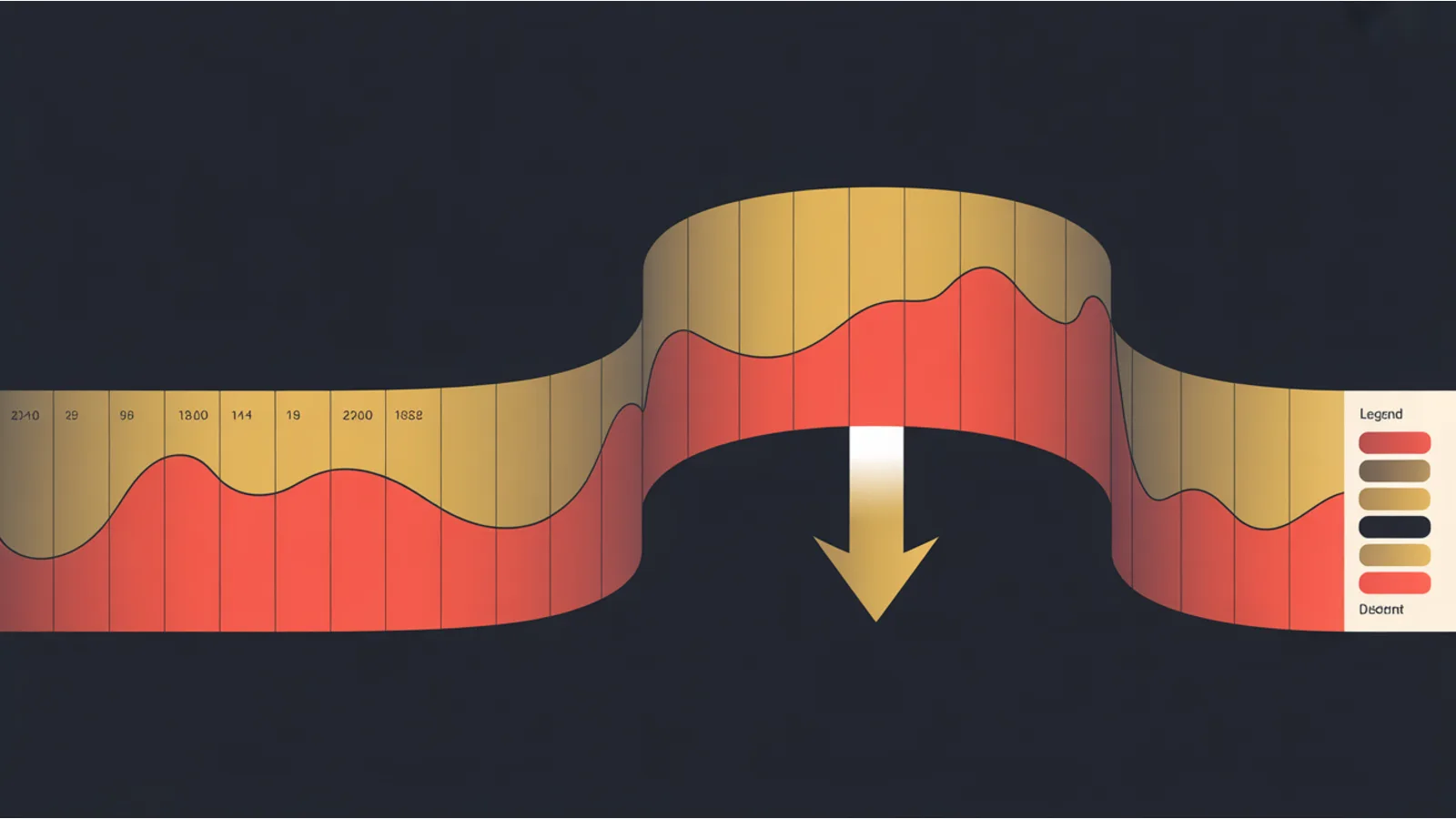

- Renders horizontal bar charts per family showing citation trend per model version.

- Emits an AI prompt with probable causes per declining family + counter-strategy + recovery timeline.
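The grouping and drift steps above can be sketched in a few lines of Python. This is a minimal illustration, not the tool's actual implementation: the comma-separated `model, YYYY-MM-DD, citations` line format, the `FAMILY_PREFIXES` mapping, and the function names are all assumptions made for the example.

```python
from collections import defaultdict
from datetime import date

# Hypothetical prefix-to-family mapping; extend it for the models you track.
FAMILY_PREFIXES = {"claude": "Claude", "gpt": "GPT", "gemini": "Gemini"}

def family_of(model: str) -> str:
    """Map a model name to its family by prefix, falling back to 'Other'."""
    name = model.strip().lower()
    for prefix, family in FAMILY_PREFIXES.items():
        if name.startswith(prefix):
            return family
    return "Other"

def parse_snapshots(text: str):
    """Parse 'model, YYYY-MM-DD, citations' lines into (model, date, count) tuples."""
    rows = []
    for line in text.splitlines():
        if not line.strip():
            continue
        model, day, count = (part.strip() for part in line.split(","))
        rows.append((model, date.fromisoformat(day), int(count)))
    return rows

def drift_by_family(rows):
    """Percent change from the oldest to the newest snapshot in each family."""
    if len(rows) < 3:
        raise ValueError("need at least 3 snapshots")
    groups = defaultdict(list)
    for model, day, count in rows:
        groups[family_of(model)].append((day, count))
    drift = {}
    for family, points in groups.items():
        points.sort()  # oldest snapshot first
        oldest, newest = points[0][1], points[-1][1]
        # Percent change is undefined when the baseline count is zero.
        drift[family] = None if oldest == 0 else (newest - oldest) * 100 / oldest
    return drift

sample = """
Claude 3.5 Sonnet, 2025-08-15, 10
Claude 4 Sonnet, 2025-11-15, 4
Claude 4 Opus, 2026-02-15, 2
"""
print(drift_by_family(parse_snapshots(sample)))  # prints {'Claude': -80.0}
```

The sample counts are made up for illustration. A family whose oldest snapshot has zero citations gets `None` rather than a percentage, since there is no baseline to compare against.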

Drift classification thresholds

Significant decline (≥50% drop): respond. The model has actively de-cited you. Counter-strategy needed within 30 days.

Decline (20-50% drop): monitor closely. Could be sampling noise; could be early warning. Re-snapshot in 30 days to confirm trend.

Stable (±20%): noise band. Citation counts naturally vary by query basket and snapshot window.

Rise (20-50% growth): keep doing whatever's working. Identify what changed.

Significant rise (≥50% growth): the new model favors your content. Document the pattern; replicate on other pages.
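As a sketch, the thresholds above map onto a small classifier. The function name is hypothetical, and the handling of exact boundary values (a drop of exactly 50% counts as significant, a drop of exactly 20% counts as a decline) is an assumption, since the bands as written touch at their edges.

```python
def classify_drift(pct: float) -> str:
    """Classify a percent change (negative = drop) into one of the five bands."""
    if pct <= -50:
        return "significant decline"
    if pct <= -20:
        return "decline"
    if pct < 20:
        return "stable"
    if pct < 50:
        return "rise"
    return "significant rise"

for change in (-80.0, -35.0, 5.0, 30.0, 120.0):
    print(change, classify_drift(change))
```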

The five most-likely causes of significant decline

When a model family de-cites you, the AI prompt asks for ranked probable causes:

1. Training corpus exclusion. The new model was trained on a different snapshot of Common Crawl, or the crawl that fed it never fetched your domain. Check Common Crawl's index for your URL in the crawls leading up to the model's training cutoff.

2. Paraphrase rewrite filter. Newer models may be tuned to paraphrase source text rather than quote it verbatim. Your content still influences the answer; you just don't get the citation. This is hard to detect from outside; the typical symptom is answers that cite competitors with weaker content.

3. Competitor displacement. A competing site started ranking higher on the same queries. Their citation grew, yours shrank. Run a comparative competitor citation audit.

4. Content staleness. Your dateModified hasn't been updated, and newer models may weight freshness more aggressively. Solution: update dateModified and add visible "as of [year]" language.

5. Schema or attribution decline. Your page lost some structural quality signal (broken schema, removed citations) in a recent update. Audit page state vs the version that was cited.
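Of the causes above, content staleness is the easiest to check mechanically. The sketch below reads `dateModified` from a page's JSON-LD using only the Python standard library. It assumes the page exposes a single top-level JSON-LD object with an ISO 8601 `dateModified` value; the helper names and the 365-day default are illustrative choices, not thresholds from any published source.

```python
import json
from datetime import date
from html.parser import HTMLParser

class JsonLdExtractor(HTMLParser):
    """Collects the text of every <script type="application/ld+json"> block."""

    def __init__(self):
        super().__init__()
        self._in_jsonld = False
        self.blocks = []

    def handle_starttag(self, tag, attrs):
        if tag == "script" and dict(attrs).get("type") == "application/ld+json":
            self._in_jsonld = True
            self.blocks.append("")

    def handle_data(self, data):
        if self._in_jsonld:
            self.blocks[-1] += data

    def handle_endtag(self, tag):
        if tag == "script":
            self._in_jsonld = False

def date_modified(html: str):
    """Return the first top-level JSON-LD dateModified as a date, or None."""
    parser = JsonLdExtractor()
    parser.feed(html)
    for block in parser.blocks:
        try:
            data = json.loads(block)
        except json.JSONDecodeError:
            continue  # skip malformed JSON-LD rather than fail the audit
        if isinstance(data, dict) and "dateModified" in data:
            # Keep only the YYYY-MM-DD part of an ISO 8601 timestamp.
            return date.fromisoformat(data["dateModified"][:10])
    return None

def is_stale(html: str, today: date, max_age_days: int = 365) -> bool:
    """Treat a page as stale if dateModified is missing or older than max_age_days."""
    modified = date_modified(html)
    return modified is None or (today - modified).days > max_age_days

page = (
    '<html><head><script type="application/ld+json">'
    '{"@type": "Article", "dateModified": "2024-01-10T08:00:00Z"}'
    "</script></head><body></body></html>"
)
print(date_modified(page), is_stale(page, date(2026, 1, 10)))
```

Pages that nest `dateModified` inside an `@graph` array would need an extra traversal step that this sketch leaves out.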

The recovery cadence

Citation recovery happens at two timescales:

30-90 days for retrieval-time citations. User-triggered fetchers and search crawlers such as ChatGPT-User, OAI-SearchBot, Claude-User, and PerplexityBot pull live content at or near query time. Improvements to your page propagate within their crawl and index cycle.

6-12 months for next training-corpus inclusion. Training-data crawlers (CCBot, GPTBot, ClaudeBot) and training opt-out tokens (Google-Extended, Applebot-Extended) govern what reaches a pretraining corpus, and a model only sees that content when it is retrained. The current Claude / GPT version won't see your changes until the NEXT model. Plan accordingly.

The split matters for strategy: if you're losing citations from retrieval-time models, fast iteration helps. If you're losing them from pretraining-only models, you're playing a slower game.
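One rough way to see which side of that split your traffic falls on is to bucket server-log user agents. The sketch below uses only a subset of the user-agent tokens named above; the bucket labels, function names, and the shortened sample user-agent strings are illustrative assumptions, not real log data.

```python
from collections import Counter

# Subset of tokens from the text; matching is by case-insensitive substring.
RETRIEVAL_AGENTS = ("ChatGPT-User", "OAI-SearchBot", "PerplexityBot")
TRAINING_AGENTS = ("CCBot", "GPTBot")

def bucket(user_agent: str) -> str:
    """Label a user-agent string as 'retrieval', 'training', or 'other'."""
    ua = user_agent.lower()
    if any(token.lower() in ua for token in RETRIEVAL_AGENTS):
        return "retrieval"
    if any(token.lower() in ua for token in TRAINING_AGENTS):
        return "training"
    return "other"

def count_buckets(user_agents) -> Counter:
    """Tally how many requests fall into each timescale bucket."""
    return Counter(bucket(ua) for ua in user_agents)

# Shortened, illustrative user-agent strings.
sample_agents = [
    "Mozilla/5.0 (compatible; GPTBot)",
    "Mozilla/5.0 (compatible; ChatGPT-User)",
    "Mozilla/5.0 (compatible; PerplexityBot)",
    "CCBot",
    "Mozilla/5.0 (Windows NT 10.0) Firefox",
]
print(count_buckets(sample_agents))
```

A log dominated by the retrieval bucket suggests page fixes can pay off within a crawl cycle; one dominated by the training bucket points to the slower timescale.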

The quarterly snapshot cadence

The minimum useful cadence:

- Quarterly snapshots for low-stakes verticals.

- Monthly snapshots for high-stakes (pricing-sensitive, news-cycle-dependent, AI-citation-dependent revenue).

- Per-model-release snapshots as a supplement — specifically capture data the day before and 30 days after each major model release (GPT-5, Claude 4.5, Gemini 2.5, etc.).

For each snapshot, use the same query basket (so the comparison is apples-to-apples) and the same N probes per query (so the count is directly comparable).
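The per-release schedule and the apples-to-apples rule can both be expressed as small checks. This is a sketch under stated assumptions: the snapshot dictionary shape (`queries`, `probes_per_query`), the function names, and the release date used in the example are all hypothetical.

```python
from datetime import date, timedelta

def release_snapshot_dates(release_day: date):
    """Return the two capture dates: the day before and 30 days after a release."""
    return release_day - timedelta(days=1), release_day + timedelta(days=30)

def comparable(a: dict, b: dict) -> bool:
    """Two snapshots compare cleanly only with the same query basket and probe count."""
    same_basket = set(a["queries"]) == set(b["queries"])
    same_probes = a["probes_per_query"] == b["probes_per_query"]
    return same_basket and same_probes

# Hypothetical release date, used only to show the arithmetic.
before, after = release_snapshot_dates(date(2026, 3, 1))
print(before, after)

q3 = {"queries": ["saas pricing strategy", "usage-based pricing"], "probes_per_query": 5}
q4 = {"queries": ["usage-based pricing", "saas pricing strategy"], "probes_per_query": 5}
print(comparable(q3, q4))
```

Running `comparable` before computing drift catches the case where a changed query basket, rather than a changed model, explains the difference in counts.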

What this audit can't measure

The tool reports relative drift in citation counts. It doesn't tell you:

- Why exactly one model favors one page. That's opaque.

- Whether the citation drove revenue. Pair with the AI Answer Conversion Path Audit.

- Citation rank within the answer. Pair with the AI Citation Position Tracker.

- Click-through rate from citation to your page. Pair with the AI Referrer Log Parser.

Related reading

- AI Citation Position Tracker — measures rank within answer

- AI Referrer Log Parser — actual click-through from AI sources

- LLM Training Data Inclusion Audit — checks Common Crawl for your URLs

- Mega AEO Analyzer — full AEO sweep including drift dimension

Fact-check notes and sources

- Model release cadence + retraining timelines: Anthropic model timeline, OpenAI model index

- Common Crawl snapshot cadence: commoncrawl.org/news — monthly + occasional supplements

- Drift threshold (50% as "significant"): heuristic — there's no published industry baseline; 50% is a common pattern-of-practice threshold

This post is informational, not LLM-strategy-consulting advice. Mentions of OpenAI, Anthropic, Google, Common Crawl, Profound are nominative fair use. No affiliation is implied.