For two years I picked AI projects the same way I'd pick a weekend coding project: start from what's interesting to build, look for somewhere to apply it, discover halfway through that nobody wants the thing. Sometimes the project shipped anyway. Usually it quietly joined the pile of things that taught me something.

The lesson, in plain words, is that the interesting question isn't "what can I build with this?" It's "whose problem am I solving, how badly does it hurt them, and is anyone else already solving it?"

Those break into four questions. Answer them before you write anything. The projects that win in 2026 almost always answer them well; the ones that stall almost always fail one of them.

1. How visible is the pain from outside?

Problems sit on a hierarchy, and your odds depend on where on it you're standing.

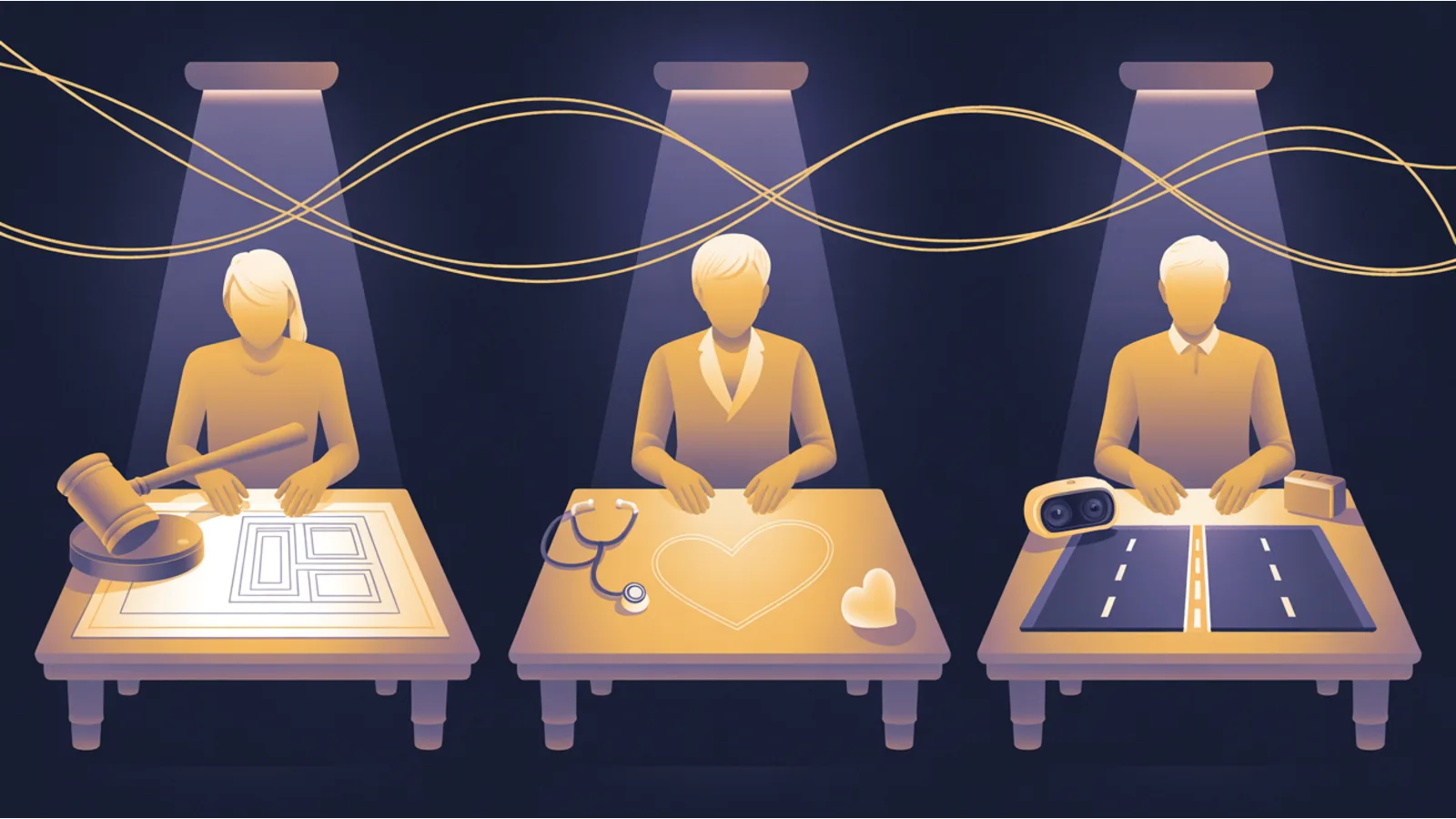

At the top: problems everyone can see, everyone has tried to solve. Consumer chatbots. General image generators. AI autocomplete for code. These spaces are crowded with well-funded teams. If you haven't already shipped, you're competing against people who did.

In the middle: problems visible to one industry, tackled by specialists in that industry. Legal contract review. Medical scribing. Sales call coaching. Competitive but winnable if your edge is real.

At the bottom, where the unfair advantages hide: problems visible only to the people trapped inside them, tackled by nobody. This is where most of the genuinely surprising products of this year have come from.

The way to tell the third category: describe the problem at a dinner party. If eyes glaze, you might be in the right place. Permit rejection letters. Medical discharge instructions. Road-surface assessments. Insurance claim intake. Compliance filings. Procurement reviews. These were uneconomical to solve for decades because software alone couldn't handle them. AI changed the math. Most builders haven't looked because they're still looking where everyone else looks.

Worked example. Mike Brown is a California attorney. His friend builds backyard cottages, the small-scale housing California wants more of. Every permit application came back rejected. Not because the plans were wrong. Because one code citation was slightly off, or a local rule overrode a state rule in a way nobody had documented clearly. Fix it, resubmit, get rejected for something else. Months went by. Houses didn't get built.

San Francisco's median time to permit approval is over six hundred days. Fees reach $74,000 per unit. Roughly half of all projects risk losing financing before the first wall goes up. Mike built CrossBeam: upload your rejection letter and your plans, get a code-referenced remediation plan in about twenty minutes. He won first place at Anthropic's 2026 hackathon against several hundred working engineers.

He didn't out-engineer them. The permit office is the least glamorous surface in civic life. No VC is funding permit tech. No AI startup is interviewing permit applicants about their rejection letters. No competition. That's the pattern.

2. Are you the user, or are you sympathetic to the user?

There's a gap between understanding a problem intellectually and having lived inside it. The gap is where most "sounds right but nobody buys" failures come from.

Think about what a cardiologist knows about patient follow-up that a product team cannot learn. Studies show patients remember about half of what their doctor tells them during a visit, and a slice of what they do remember is wrong. Cardiologists know this is true because they've had the same phone call the next morning for a decade: "what exactly did you say about the medication?" They know which instructions get skipped because they're embarrassing. They know the exact fifteen minutes in which a patient still has context before it evaporates on the walk to the car.

You cannot interview your way to that. Not with the best product team in the world, not with a hundred user sessions. Postvisit.ai exists because a Brussels cardiologist, Michał Nedoszytko, had been standing inside the problem for ten years and finally had the tooling to do something about it. Working product in a week.

The signal to look for in yourself: problems whose texture you can describe in sentences nobody outside the field would know to ask about. Not the headline version. The texture: the weird stuff, the workarounds, the things that embarrass users into silence. If everything you can say about a problem is something anyone could look up on Wikipedia, you're sympathetic to the user. You're not the user.

3. Is the task, stripped of jargon, information processing?

Most people over-qualify this one. They assume "expert tasks" can't be automated because they're expert. That doesn't follow. A lot of expert work, stripped of its professional vocabulary, is pattern matching against a standard.

The road technician who built TARA ran this exercise on his own job. Before: drive a road, eyeball the surface, compare what you see to a known condition standard, assign a numeric grade. After: drive a road with a dashcam, upload the footage, receive a complete condition report in hours instead of weeks: surface type per segment, roughness index, repair cost estimates, and an equity assessment flagging which communities benefit most from a fix. The hard-looking expertise turned out to be visual pattern matching followed by a table lookup. Both compressible.

Run the test on any expert job you know:

- Insurance adjuster: look at damage, compare to a schedule, attach a number.

- Compliance officer: look at contract text, compare to a policy, flag exceptions.

- Auditor: look at a transaction, compare to a rule, flag anomalies.

- Real-estate appraiser: look at a property, compare to comps, attach a value.

- Patent examiner: look at a claim, compare to prior art, grant or reject.

- Radiologist: look at a scan, compare to priors, attach a diagnosis.

Every one of them reads as "look at something, compare to a standard." Every one is compressible. The jargon disguises the mechanism, and the mechanism is one that AI does well right now.
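If it helps to see the shape, here is a minimal Python sketch of "look, compare to a standard, attach a verdict." Everything in it is illustrative: the `Band` type, the `grade` function, and the roughness thresholds are made up for this example and are not taken from TARA or any real grading standard. In a real build the `measure` step is where the model would sit; here it is a stub that reads a number off a dict.

```python
from dataclasses import dataclass
from typing import Any, Callable, Sequence


@dataclass(frozen=True)
class Band:
    """One row of a hypothetical standard: values below `upper` get `verdict`."""
    upper: float
    verdict: str


def grade(measure: Callable[[Any], float],
          standard: Sequence[Band],
          observation: Any) -> str:
    """Look at something (measure), compare to a standard (bands), attach a verdict."""
    value = measure(observation)
    for band in standard:  # bands are assumed sorted by ascending `upper`
        if value < band.upper:
            return band.verdict
    raise ValueError(f"no band covers value {value!r}")


# Hypothetical thresholds, invented for illustration only.
ROAD_STANDARD = [
    Band(2.0, "good"),
    Band(4.0, "fair"),
    Band(float("inf"), "poor"),
]


def read_roughness(segment: dict) -> float:
    """Stand-in for the perception step (in practice, a vision model's output)."""
    return segment["roughness"]


print(grade(read_roughness, ROAD_STANDARD, {"roughness": 3.1}))
```

Swap the standard and the measuring function and the same three lines of logic cover the adjuster, the auditor, or the appraiser. That's the point of the test: the domain changes, the mechanism doesn't.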

4. Can you build a thin slice this week instead of a platform this year?

This is the one technical people trip over because they want to build a platform. Platform ambition kills weekend wins.

The projects that shipped and won during Anthropic's hackathon did exactly one thing well. Not twenty. CrossBeam doesn't replace a permitting system; it turns a rejection letter into a remediation plan. Postvisit.ai doesn't replace a hospital EHR; it turns one clinical record into a patient-facing companion. TARA doesn't replace infrastructure management; it turns dashcam footage into a condition report. Each one is a thin slice. One transformation that would otherwise cost weeks of human time.

Thin slices win because they're describable in a sentence, buildable in a week, and test the market idea without runway. If the slice gets used, the platform underneath can come later, paid for by customers, not by hope. If the slice doesn't get used, you learned the idea was wrong without having built scaffolding for an idea that didn't pay.

What to do with the four

Run your next five project ideas through them. Most won't score well on all four. That's the whole point. The two or three that do are worth a weekend. The ones that fail the first question are resume projects dressed up as products. The ones that fail the second are someone else's market. The ones that fail the third might still be interesting but won't compress the way current AI compresses things. The ones that fail the fourth are either scope problems you can fix or platform fantasies you shouldn't.
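If you'd rather run that pass in a script than in your head, a rough sketch might look like the following. The `Idea` fields, the function names, and both example ideas are hypothetical; the four yes/no answers still come from your own judgment, and the code only keeps the bookkeeping honest.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Idea:
    name: str
    invisible_from_outside: bool  # Q1: only people inside the problem can see it
    lived_by_builder: bool        # Q2: you are the user, not just sympathetic
    look_and_compare: bool        # Q3: the task is information processing
    thin_slice: bool              # Q4: one transformation, buildable this week


# Field names in question order, so a failure maps back to a question number.
QUESTIONS = (
    "invisible_from_outside",
    "lived_by_builder",
    "look_and_compare",
    "thin_slice",
)


def failed_questions(idea: Idea) -> list:
    """Return the 1-based numbers of the questions this idea fails."""
    return [n for n, field in enumerate(QUESTIONS, start=1)
            if not getattr(idea, field)]


def worth_a_weekend(idea: Idea) -> bool:
    """An idea earns a weekend only if it fails none of the four."""
    return not failed_questions(idea)


# Two made-up ideas, for illustration only.
ideas = [
    Idea("general-purpose chatbot", False, False, True, True),
    Idea("rejection letter to fix list", True, True, True, True),
]

for idea in ideas:
    print(idea.name, failed_questions(idea), worth_a_weekend(idea))
```

The list of failed question numbers is more useful than a total score, because each failure means something different: a failed fourth question may just be a scope problem, while a failed second one means it's somebody else's market.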

The difference between a good AI project and a bad one in 2026 is almost never the technical sophistication. It's the pre-build judgment. Spend more time there. Spend less time on frameworks, prompt libraries, and model selection; those are the easy parts now.

Tools that help at each step

- Step 1. Vetting whether a niche is already saturated: Keyword Inspection tells you which queries exist and who ranks for them; SERP Features tells you whether the top results are already feature-heavy and hard to displace.

- Step 2. Sharpening a problem statement you've lived: the Prompt Enhancer is a good forcing function for writing a taut description before you start.

- Step 3. Scoping the wedge into an AI spec: the CLAUDE.md Generator produces the memory file for the session where you build it.

- Step 4. Keeping scope honest: my own rule is that if I can't describe the wedge in one sentence with no "and"s, I haven't finished scoping it yet.
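The Step 4 rule is easy to turn into a crude check. This is a sketch, not a tool I'm claiming exists: `is_scoped` is a hypothetical helper, and its idea of "one sentence" is a simple heuristic (split on terminal punctuation followed by whitespace or end of text), so treat a pass as a prompt to reread the sentence, not as proof.

```python
import re


def is_scoped(description: str) -> bool:
    """True if the description is a single sentence containing no standalone 'and'."""
    text = description.strip()
    # Split on ., !, or ? only when followed by whitespace or end of text,
    # so a name like "Postvisit.ai" is not counted as a sentence break.
    parts = re.split(r"[.!?]+(?:\s+|$)", text)
    sentences = [p for p in parts if p.strip()]
    has_and = re.search(r"\band\b", text, flags=re.IGNORECASE) is not None
    return len(sentences) == 1 and not has_and


print(is_scoped("Turn a rejection letter into a remediation plan."))
print(is_scoped("Turn dashcam footage into a condition report and schedule repairs."))
```

The first description passes and the second doesn't, because the "and" is smuggling in a second product.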

The shift, in one paragraph

Nothing about AI turns a weak idea into a good one. What AI changed is the ratio of "can you build?" to "do you know what to build?" Two years ago, a great idea with no engineering resources stayed in a notebook. Now, those same idea-holders are shipping. The scarce thing shifted from building to choosing what to build. The people who've been sitting inside a problem for years, in jobs no tech company was paying attention to, suddenly have everything they need to ship. If that sounds like you, the four questions are for you.

The names and projects referenced (CrossBeam, Postvisit.ai, TARA) are public record from Anthropic's 2026 Claude Code Hackathon and have been covered by several community write-ups, including a Medium piece by Jing Hu titled "A Lawyer Just Beat 500 Developers at Anthropic's Hackathon." The four-question framing and analysis in this post are mine; the builder examples are cited as public-record proof points.